Talking trust with Wikipedia Founder Jimmy Wales

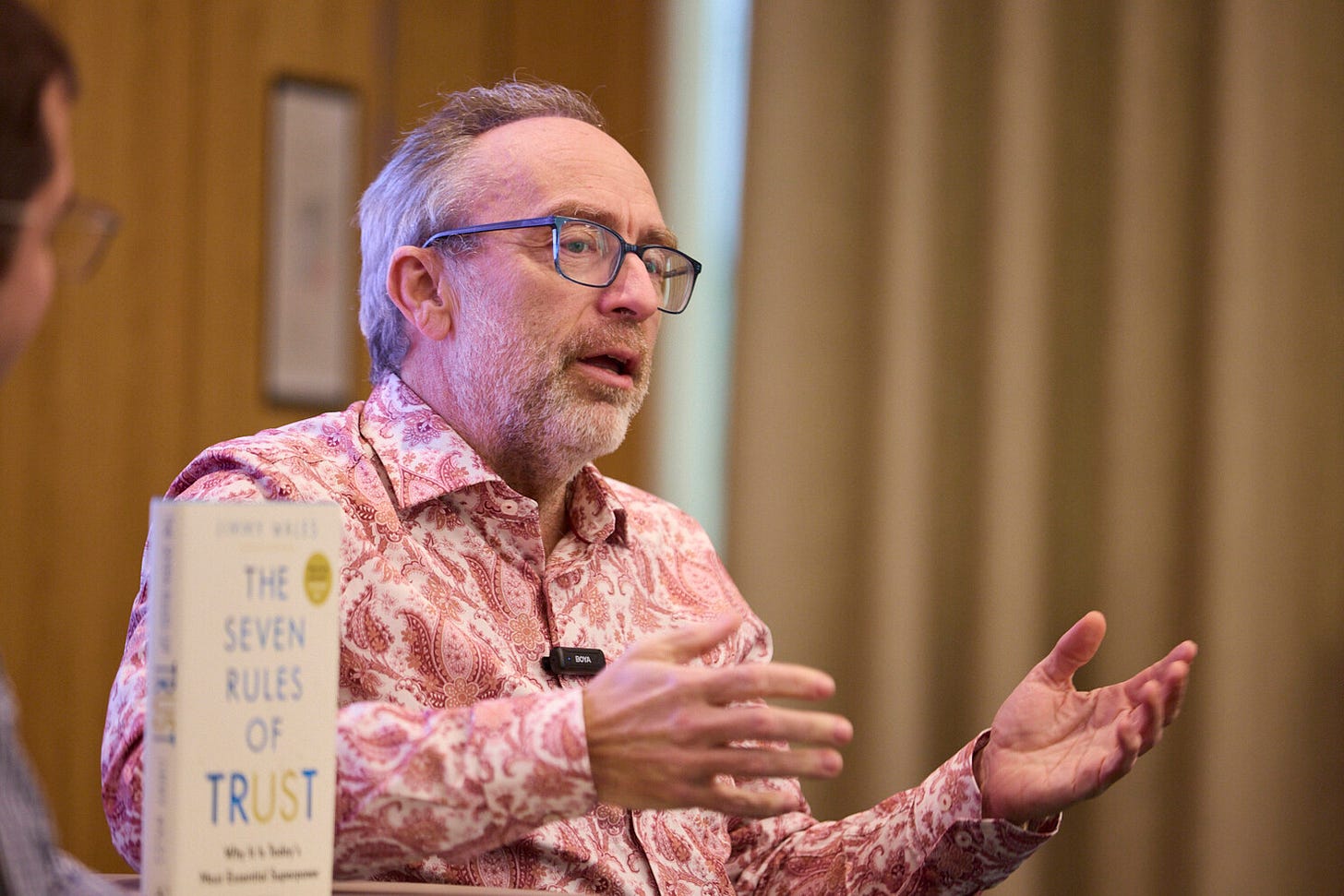

Recently, I sat down with Jimmy Wales for a wide-ranging conversation about trust: how it’s built, how it’s lost, and how it might be regained.

I'm an amateur photographer, and the first time I saw the Milky Way was on my honeymoon; as my eyes adjusted to the sky, this glowing, streaky beast emerged, and I genuinely lost my balance in a moment of awe. Little did I know that the Milky Way and Wikipedia have something profound in common: the number 300 billion, for stars and page views alike.

It’s a humbling reminder of the significance of Jimmy Wales’ achievement in founding Wikipedia and the enormous effort of volunteers to build the internet’s largest freely accessible bank of knowledge. He is one of the original internet entrepreneurs, and after the inventor of the World Wide Web, Tim Berners-Lee, I would argue the most important. After all, it was Socrates who said, “Knowledge will make you be free”.

Jimmy’s contemporaries include the likes of Jeff Bezos, Elon Musk, and Larry Page – but the philosophical mission of the Wikimedia Foundation that “open access to knowledge is a fundamental right, and a driver for social and economic development” means becoming a billionaire ‘tech bro’ was never part of the objective for Jimmy. As we now find ourselves in a world full of volatility and violence, the efforts of Wikimedia and Wikipedia have never been more important.

Trust is the currency of the internet. That is especially true in an online environment dominated by rampant ‘AI slop’ across social media and by profound misinformation and disinformation, while traditional communications sources are at an all-time trust low… even the BBC. It can be difficult to know where to turn, but the reassuring words of Jimmy are a good start.

His new book, The Seven Rules of Trust, reveals the key principles that led to Wikipedia’s success. He kindly agreed that I could interview him at Kekst CNC to explore what building Wikipedia can teach us about managing the trust of our organisations.

Purpose, process, and people

When Wikipedia launched, it was a revolutionary idea – an open wiki that anyone could edit. It succeeded only because of a clear purpose and a consistent process.

“With Wikipedia, one of the things that’s been very helpful… is that we have a very simple purpose: to create a free, high-quality, neutral encyclopedia. And that purpose defines everything we do, and defines the work, and defines the rules, and defines everything.”

This clarity of mission is not just a branding exercise. It’s the backbone of Wikipedia’s community management, guiding decisions about what behaviour is acceptable and what is not. “Have a good purpose. That central purpose has really helped a lot, and a lot flows from that,” Jimmy explains.

But purpose alone is not enough. The process matters too. Wikipedia’s rules, such as “no personal attacks”, are designed to foster civility and constructive disagreement.

“Unlike in social media, where personal attacks are kind of, like, what most people think it’s for, right? We said, no, no, no, you shouldn’t… we shouldn’t be attacking each other, you shouldn’t be attacking even people we’re writing about… it’s just not what we’re here for.”

This approach is not without its challenges. Jimmy is candid about the difficulties of managing a large, diverse community.

“The hard part is the person who is a really good contributor in terms of contributing good quality content, and they’re being massively rude to a bunch of people… At some point, you just have to say, yeah, look, sorry, if you can’t stop attacking people, you have to leave.”

Forgiveness and second chances are part of the culture, but so is accountability. “You shouldn’t get blocked or banned from the website for your first transgression, so there’s a lot of forgiveness… but there’s also a social expectation… if you’ve been horrible, you should apologise.”

Evidence and neutrality

If trust begins with purpose and process, it is sustained by evidence and neutrality. Jimmy is a stickler for reliable sources and balanced presentation.

“Read up on what counts as a reliable source… how you can manage to say, actually, this is an error, or this is not the full story… the typical kind of case would be, like… this is a criticism that’s out there, but you haven’t mentioned the company’s response, which was published here. That needs to be incorporated, and usually that gets pretty good results.”

He is clear-eyed, though, about the limits of reputation management.

“It’s not somewhere I’m going to be able to turn this into a PR puff piece, but I can make sure that if there’s facts, they’re presented correctly, there’s two sides of the story, we’ve got both sides, all that kind of stuff. That’s kind of the best you can do...”

This commitment to neutrality extends beyond Wikipedia to the wider media landscape. In a deeply polarised environment, Jimmy argues, the restoration of trust depends on a return to “old-fashioned journalistic neutrality, objectivity.”

AI tokens, hallucinations, and attribution

No conversation about trust in 2025 is complete without a discussion of AI. Jimmy is both fascinated by and wary of the technology’s impact on knowledge and credibility.

He offers a technical critique of how generative AI works:

“The way that it works is it’s always choosing the next token or the next word to be the most probable word, with a little randomness thrown in, which is why it gives different answers at different times. And so, writing the most plausible thing isn’t the same thing as writing the most true thing, and so what you get is hallucinations. It goes down a path, and then it just keeps making stuff up because it sounds plausible and great, and it’s just completely wrong.”
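
To make that description concrete, here is a minimal sketch of next-token sampling. It is an illustration only, not how any particular AI product is built: the function name `sample_next_token`, the `temperature` setting, and the scores are all made up for the example. The scores are deliberately chosen so that the most plausible-sounding word is also the wrong one.

```python
import math
import random

def sample_next_token(scores, temperature=1.0, rng=random):
    """Pick one token from a dict of token -> raw plausibility score."""
    if temperature <= 0:
        # No randomness: always return the single most probable token.
        return max(scores, key=scores.get)
    # Softmax with temperature: a higher temperature flattens the odds,
    # so less probable tokens get picked more often.
    scaled = {tok: s / temperature for tok, s in scores.items()}
    top = max(scaled.values())
    weights = {tok: math.exp(s - top) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]

# Hypothetical scores for the word after "The capital of Australia is".
# "Sydney" scores highest here because it sounds plausible, not because it is true.
scores = {"Sydney": 2.0, "Canberra": 1.8, "Melbourne": 0.5}

print(sample_next_token(scores, temperature=0))      # always the top-scoring word
print([sample_next_token(scores) for _ in range(5)])  # varies from run to run
```

With the randomness switched off, the sketch returns the top-scoring word every time; with it switched on, repeated runs give different answers. In neither case does anything check whether the chosen word is true, which is the gap Jimmy is pointing at.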

He is adamant that AI is not yet ready to replace human editors. Whilst AI can write text that sounds convincing, it’s not the same as writing factually correct articles. The immediate opportunity for AI is to support but not supplant human judgment.

“I’ve written a little program… I feed in a short article, and I feed in the five sources, and I ask the question, is there anything in the sources that isn’t in Wikipedia, but should be? Or is there anything in the Wikipedia entry that is not supported by the sources? And it’s actually kind of okay… If a human could check it, like, that could be quite interesting, and it could help our community be more productive and so forth.”
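
Jimmy does not describe how his program is written, so the following is only a guess at the general shape of such a check, not his code. It assembles the article, the sources, and the two questions he quotes into a single prompt; the function name `build_review_prompt` and the layout of the prompt are assumptions, and the step of sending the prompt to an AI model is deliberately left out.

```python
def build_review_prompt(article, sources):
    """Assemble one plain-text prompt from an article and its sources."""
    parts = ["ARTICLE:", article.strip(), ""]
    for number, source in enumerate(sources, start=1):
        parts += [f"SOURCE {number}:", source.strip(), ""]
    parts += [
        "QUESTIONS:",
        "1. Is there anything in the sources that isn't in the article, but should be?",
        "2. Is there anything in the article that is not supported by the sources?",
        "List each finding with its source number so a human editor can check it.",
    ]
    return "\n".join(parts)

# Placeholder text standing in for a real article and its cited sources.
prompt = build_review_prompt(
    "Short article text.",
    ["Text of the first source.", "Text of the second source."],
)
print(prompt)
```

Asking for source numbers alongside each finding keeps the output checkable, in line with Jimmy's point that the value lies in a human being able to verify what the AI reports.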

But the risks are real. “The Wikimedia Foundation shouldn’t be using AI to produce content that’s shown directly to readers without humans checking it first. Because the risk is so high, because we’re quite particular about how things are done.”

Economics of trust

The rise of AI has not just changed how information is produced, but also how it is consumed. Jimmy is frank about the impact on Wikipedia’s traffic and infrastructure. “... there was a blog post recently that we’ve seen an 8% decline in visits from humans. That doesn’t mean a decline in traffic... we’ve seen an increase in visits from bots. So that’s concerning, but not, like, a panic case.”

The proliferation of bots crawling Wikipedia has real costs.

“The AI companies are crawling Wikipedia quite heavily, and it’s not just the big ones, but all kinds of startups and things like that. And they’re hammering our servers… So it turns out it’s a disproportionate hit on our costs, and because we’re a charity…”

For Jimmy, the key is attribution. “Our business model, we’re a charity business model, is not page views or ads. So losing page views doesn’t really matter. What matters is, as long as the public knows the information comes from us. In cases where they don’t know, you know, like, that would be a sad outcome.”

Anonymity and pseudonymity

Jimmy’s views on identity in online communities are nuanced and practical.

“In Wikipedia, when you go to sign up in Wikipedia to create a username, username can be anything. JoeBob123 or whatever, and people go by that. There’s a really very well-known Wikipedian called New York Brad. He’s one of the most respected Wikipedians. I happen to know he’s a partner in a law firm in New York, and his name is not Brad. But he’s a great guy. And people don’t know his real name… But the point is, he edits under the same identity. And so, in Wikipedia, your pseudonym is your reputation.”

Reputation is earned through consistent behaviour. “People do want to build a good reputation, because if you don’t have a good reputation, people don’t listen to you, and they get reverted, and so on and so forth.”

He distinguishes between pseudonymity and anonymity. “In some contexts, I think a real names policy is super important. So one of the examples, the case study in the book, is Airbnb… That’s an area where real names and real identity really matters. In other contexts, pseudonymity is enough, as long as you’ve got a reputation that builds over time.”

He is critical of true anonymity, especially on platforms like Twitter (now X).

“Most of the time, if I go on… I post, it doesn’t matter what you post, so if I look, here’s my recipe for chocolate cake, you know, somebody’s gonna yell at you. Typically, I’ve never heard from them before, I’ll never hear from them again… When somebody’s behind an anonymous shield, they can be a jerk for whatever reasons motivate them, and that’s that. And you’re never going to see them again, and it’s toxic.”

Trust as a collective endeavour

Jimmy’s reflections remind us that trust is not a static asset but a dynamic process, built through purpose, process, evidence, and engagement. With the growth of AI and increasing polarisation, the challenge is not just to find trustworthy sources, but to become trustworthy ourselves. Whether you’re a journalist, a communications professional, or a business leader, the lessons from Wikipedia’s journey are clear: clarity, civility, evidence, and openness are the pillars on which trust is built. The rest is up to us.
